Case Study 01

MoEngage Information Architecture

Designing Structural Clarity in a Multi-Team System

Role

IA Lead + Principal Designer

Scope

Cross-functional, multi-team

Collaborators

UX Research, PM, Engineering, Data

Platform

B2B SaaS — MarTech

01 — Problem Statement

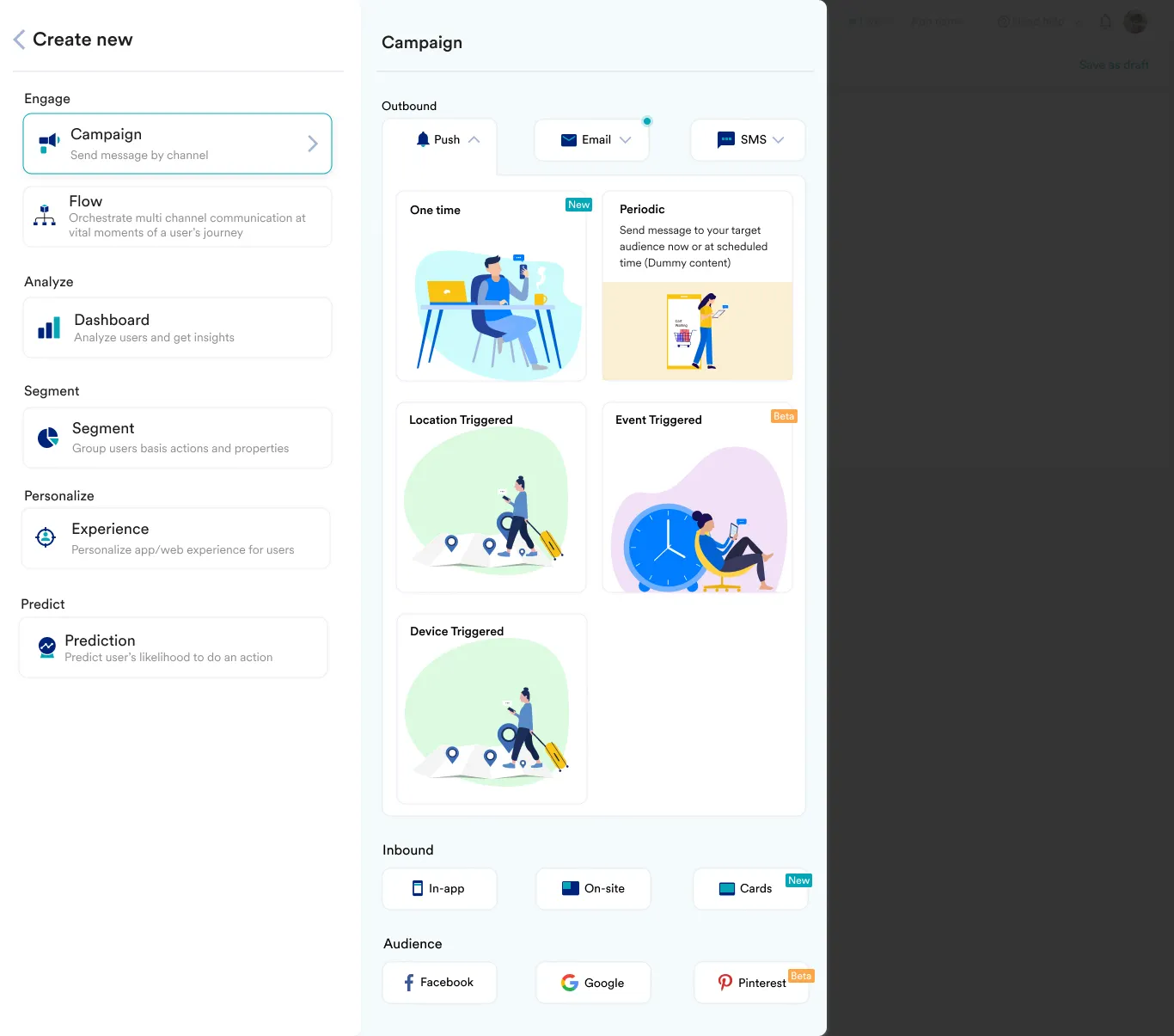

Marketers using MoEngage spent significant time navigating a fragmented menu that had grown organically over years — raising support costs, depressing CSAT, and contributing to early churn.

MoEngage is a multi-channel engagement platform. Each product area had added navigation structure independently, without a shared information model, so the menu grew along team boundaries. The result was an IA that mirrored internal team structure, not user workflows.

The business cost was measurable: recurring support tickets, elevated churn signals from power users, and friction in onboarding new customers — all traceable to structural confusion, not missing features.

02 — My Role vs. Team Contribution

03 — Why This Was Hard

Three compounding constraints made this genuinely difficult — not technically, but organizationally.

Visibility without accountability

Everyone agreed the IA was broken, but no one had the mandate to fix it. Fixing it meant negotiating scope across teams that each owned a section of the navigation, and absorbing the risk of a backend refactor.

Correctness vs. smoothness in tension

The structurally correct decision — removing Create Campaign from primary nav — would create real short-term disruption for users with six years of muscle memory.

04 — Research & Discovery

Step 01

IA Mapping

Mind-mapped the entire product IA to identify structural flaws before forming any hypothesis.

Step 02

Amplitude Analytics

Analysed navigation event data to understand real user flows — not assumed ones.

Step 03

Competitor Analysis

Studied B2B SaaS navigation structures. Formed structural hypotheses.

Step 04

Card Sorting

Open card sort with real customers. Analysed using ProvenByUsers.com.

Step 05

Hypothesis Formation

Synthesised data into workflow model. Prototyped against hypothesis.

Step 06

UAT + Beta

Internal UAT followed by beta with existing customers. Monitored via CSM.

The true user workflow is: Analyse → Segment → Campaign. The IA should reflect this sequence — not the internal team structure that built it.
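For readers unfamiliar with how open card sort results (Step 04) are typically summarised, the following is a minimal sketch of a pairwise co-occurrence score: the fraction of participants who placed two cards in the same group. It is a generic technique, not a description of how ProvenByUsers computes its reports, and the card labels and the `cooccurrence` function name are hypothetical.

```python
from collections import Counter
from itertools import combinations


def cooccurrence(sorts):
    """Return {(card_a, card_b): share of participants who grouped them together}.

    `sorts` is a list with one entry per participant; each entry is a list of
    groups, and each group is a set of card labels. All labels used here are
    illustrative, not the cards from the actual study.
    """
    pair_counts = Counter()
    for groups in sorts:
        for group in groups:
            # Sort labels so each pair has one canonical (a, b) ordering.
            for a, b in combinations(sorted(group), 2):
                pair_counts[(a, b)] += 1
    participants = len(sorts)
    return {pair: count / participants for pair, count in pair_counts.items()}


# Two hypothetical participants sorting three hypothetical cards.
example_sorts = [
    [{"Segments", "Campaigns"}, {"Dashboards"}],
    [{"Segments", "Campaigns", "Dashboards"}],
]
scores = cooccurrence(example_sorts)
```

Pairs with a high score are candidates for sharing a navigation group; pairs with a low score suggest the cards belong in different parts of the workflow.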

05 — The Hardest Decision

Decision

Remove Create Campaign from primary navigation

Analytics showed 94% of users create campaigns from the All Campaigns page — not from nav. Its position in primary nav was creating cognitive load without behavioral utility.

| Factor | Option A: keep in primary nav | Option B: remove from primary nav (chosen) |
|---|---|---|
| User mental model fit | Low: not a nav starting point | High: places action where behavior occurs |
| Short-term disruption | None: muscle memory preserved | High: six years of habit interrupted |
| Long-term integrity | Poor: perpetuates misaligned IA | Strong: nav reflects actual workflow |
| Assumption tested by data | No: based on assumed workflow | Yes: Amplitude confirmed the real flow |
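A figure like the 94% entry-point share can be derived from exported navigation events. The sketch below shows one plausible way to compute it, assuming each event is a `(user_id, entry_point)` pair recorded when a user starts creating a campaign. The event shape, the entry-point names and the `primary_entry_share` function are assumptions for illustration, not Amplitude's API or the team's actual query.

```python
from collections import Counter, defaultdict


def primary_entry_share(events):
    """Return {entry_point: share of users who mostly start from it}.

    `events` is an iterable of (user_id, entry_point) tuples, one per
    campaign-creation start. Each user is counted once, under the entry
    point they used most often.
    """
    per_user = defaultdict(Counter)
    for user_id, entry_point in events:
        per_user[user_id][entry_point] += 1

    # Reduce each user to their single most-used entry point.
    primary = Counter(
        counts.most_common(1)[0][0] for counts in per_user.values()
    )
    total_users = sum(primary.values())
    return {entry: n / total_users for entry, n in primary.items()}


# Hypothetical sample: four users, two possible entry points.
sample_events = [
    ("u1", "all_campaigns_page"),
    ("u1", "all_campaigns_page"),
    ("u1", "primary_nav"),
    ("u2", "all_campaigns_page"),
    ("u3", "primary_nav"),
    ("u4", "all_campaigns_page"),
]
shares = primary_entry_share(sample_events)
```

Counting users rather than raw events keeps a handful of very active accounts from dominating the result, which matters when the question is how many people rely on a nav item.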

06 — Rollout Strategy & Learning

We rolled out to 10% of users with a Classic/New toggle. This de-risked the architectural change by limiting the blast radius, and it generated real behavioral signal from production at scale rather than only from user testing.
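A percentage rollout with a user-facing toggle is commonly implemented with deterministic bucketing, so that a given user always lands in the same cohort. The sketch below illustrates that general pattern under stated assumptions: only the 10% figure and the Classic/New toggle come from this case study, while the hashing scheme, the salt and the function names are hypothetical and do not describe MoEngage's actual implementation.

```python
import hashlib

ROLLOUT_PERCENT = 10  # share of users exposed to the new navigation


def in_new_nav_cohort(user_id, percent=ROLLOUT_PERCENT, salt="new-nav"):
    """Deterministically assign a user to the rollout cohort.

    Hashing (salt, user_id) maps each user to a stable bucket in 0..99;
    users whose bucket is below `percent` are in the cohort.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 100
    return bucket < percent


def resolve_nav(user_id, user_pref=None):
    """Pick which navigation to render: "classic" or "new".

    Users outside the cohort always get the classic navigation. Users
    inside the cohort default to the new one but can toggle back.
    """
    if not in_new_nav_cohort(user_id):
        return "classic"
    return user_pref if user_pref in ("classic", "new") else "new"
```

Because assignment depends only on the user ID and the salt, raising `ROLLOUT_PERCENT` later keeps every existing cohort member in the cohort and only adds new users.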

What Went Wrong

Confusion tickets spiked because customers could not find Create Campaign. One customer posted publicly about the frustration.

How It Was Corrected

Designed a unified Create button — contextual access to create campaigns, segments, or any object from the menu. Preserved the new structural logic while solving the discoverability gap.

Correct vs. revert is a meaningful distinction. The confusion spike told us where the solution was incomplete — not that the structural decision was wrong.

07 — Org-Level Impact

- Established a shared IA standard with defined principles for how new features get placed in navigation — eliminating the organic-growth pattern that created the original problem.

- Reduced cross-team dependency chaos by making navigation ownership explicit rather than implicit and contested.

- Improved discoverability for new feature launches — teams now had a structural framework to place features within.

- Permanently reduced support load — the 50% complaint reduction was a structural fix, not a one-time improvement.

The most important outcome was not the metrics. It was converting a problem everyone could see but no one could act on into a decision that multiple teams could coordinate around.

08 — What I'd Do Differently

- Segment admin-heavy users earlier. We identified the 15% non-adopters post-launch. Earlier segmentation would have let us design a parallel migration path before rollout.

- Formalise the IA governance model during the project. The shared structural standard was never codified into a written framework.

- Instrument Create Campaign discoverability as a specific leading indicator pre-launch.

- Design the unified Create button earlier — contextual creation was the right solution from the start.

09 — Design Principles Demonstrated